However, the choice of best-of-breed laboratory instruments and instrument systems can present challenges when it comes to getting everything to work together in a seamless way. The final part of this chapter will look at the issue of standard data interchange formats, the extent of the challenge, and some of the initiatives to address it.

Simple laboratory instruments

Devices such as analytical balances and pH meters use low-level processing to carry out basic functions that make them easier to work with. The tare function on a balance avoids a subtraction step and makes it much easier to weigh out a specific quantity of material.

Connecting them to an electronic lab notebook (ELN), a laboratory information management system (LIMS), a lab execution system (LES), or a robot adds computer-controlled sensing capability that can significantly off-load manual work. Accessing that balance through an ELN or LES permits direct insertion of the measurement into the database and avoids the risk of transcription errors. In addition, the informatics software can catch errors and carry out calculations that might be needed in later steps of the procedure.

The connection between the instrument and computer system may be as simple as an RS-232 connection or USB. Direct Ethernet connections or connections through serial-to-Ethernet converters can offer more flexibility by permitting access to the device from different software systems and users. The inclusion of smart technologies in instrumentation significantly improves both their utility and the labs’ workflow.
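As a minimal sketch of what the receiving software does once such a connection exists, the following Python turns one line of text, as a balance might send it over a serial link, into a structured reading. The line format (a stability flag, a signed value and a unit) and the names `Reading` and `parse_balance_line` are assumptions made for illustration; each real instrument documents its own output format.

```python
import re
from dataclasses import dataclass

# Hypothetical line format: "<status>,<signed value> <unit>", e.g. "ST,+0012.3456 g".
# "ST" (stable) and "US" (unstable) are illustrative flags, not a vendor specification.
_LINE = re.compile(r"^(ST|US),\s*([+-]?\d+(?:\.\d+)?)\s*([a-zA-Z]+)$")


@dataclass
class Reading:
    stable: bool
    value: float
    unit: str


def parse_balance_line(line: str) -> Reading:
    """Turn one text line from the instrument into a structured reading."""
    match = _LINE.match(line.strip())
    if match is None:
        # Rejecting unexpected text is what prevents silent transcription errors.
        raise ValueError(f"unrecognised balance output: {line!r}")
    status, value, unit = match.groups()
    return Reading(stable=(status == "ST"), value=float(value), unit=unit)


reading = parse_balance_line("ST,+0012.3456 g\r\n")
```

An ELN or LES would typically store the value only when `reading.stable` is true, which is the kind of error-catching the informatics software can add.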

Computerised instrument systems

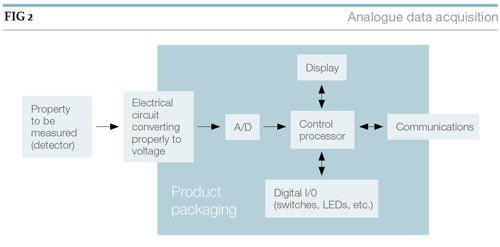

The improvement in workflow becomes more evident as the level of sophistication of the software increases. It is rare to find commercial instrumentation that doesn’t have processing capability, either within the instrument’s packaging or through a connection to an external computer system.

The choice of dedicated computer-instrument combinations vs. multi-user, multi-instrument packages is worth careful consideration. The most common example is chromatography, which has options from both instrument vendors and third-party suppliers.

One of the major differences is data access and management. In a dedicated format, each computer’s data system is independent and has to be managed individually, including backups to servers.

It also means that searching for data may be more difficult. With multi-user/instrument systems there is only one database that needs to be searched and managed.

If you are considering connecting the systems to a LIMS or ELN, make the connections as simple as possible. If an instrument supported by the software needs to be replaced, changing the connection will be simpler.

Licence costs are also a factor. Dedicated formats require a licence for each system. Shared-access systems have more flexible licensing arrangements. Some charge per user and connected instrument; others charge only for the users and instruments that are active at the same time.

In the latter case, if there are eight instruments and four analysts, of which only half may be simultaneously active, licences for only four instruments and two users are needed.
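The arithmetic behind that example can be written out as a short Python sketch. The function name `concurrent_licences` is hypothetical, and rounding up is an assumption (a fraction of a licence cannot be bought); actual vendor licensing terms vary.

```python
import math


def concurrent_licences(total: int, active_fraction: float) -> int:
    """Licences needed when only a fraction of the total is active at once."""
    # Round up: a partial licence cannot be purchased (assumption).
    return math.ceil(total * active_fraction)


instrument_licences = concurrent_licences(8, 0.5)  # eight instruments, half active -> 4
user_licences = concurrent_licences(4, 0.5)        # four analysts, half active -> 2
```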

One factor that needs attention is the education of laboratory staff in the use of computer-instrument systems.

While instrument software systems are capable of doing a great deal, their ability to function is often governed by user-defined parameters that affect, at least in chromatography, baseline corrections, area allocation for unresolved peaks, and so on. Carefully adjusted and tuned parameters will yield good results, but problems can occur if they are not managed and checked for each run.

Instrument data management

The issue of instrument data management is a significant one and requires considerable planning. Connecting instruments to a LIMS or ELN is a common practice, though often not an easy one if the informatics vendor hasn’t provided a mechanism for interfacing equipment. Depending on how things are set up, only a portion of the information in the instrument data system is transferred to the informatics system.

If the transfer is the result of a worklist execution of a quantitative analysis, only the final result may be transferred – the reference data still resides on the instrument system. The result is a distributed data structure. In regulated environments, this means that links to the backup information have to be maintained within the LIMS or ELN, so that each result can be traced back to the original analysis.
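One way to picture such a link is a result record that carries a pointer to the raw data left on the instrument system. The Python below is a sketch under stated assumptions: the record layout, the field names and the use of a SHA-256 checksum to detect later changes to the raw file are illustrative choices, not a description of any particular LIMS or ELN.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ResultRecord:
    sample_id: str
    value: float
    unit: str
    raw_data_uri: str      # where the original data lives (hypothetical location)
    raw_data_sha256: str   # fingerprint of the raw data at transfer time


def make_record(sample_id: str, value: float, unit: str,
                raw_data_uri: str, raw_bytes: bytes) -> ResultRecord:
    """Build the record sent to the informatics system, keeping the back-link."""
    digest = hashlib.sha256(raw_bytes).hexdigest()
    return ResultRecord(sample_id, value, unit, raw_data_uri, digest)


def raw_data_unchanged(record: ResultRecord, raw_bytes: bytes) -> bool:
    """Check that the raw data retrieved later is what the result was based on."""
    return hashlib.sha256(raw_bytes).hexdigest() == record.raw_data_sha256


raw = b"time,signal\n0.0,0.01\n0.1,0.02\n"
record = make_record("S-001", 5.4, "mg/L", "cds://server/run42/S-001.raw", raw)
```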

The situation becomes more interesting when instrument data systems change or are retired. Access still has to be maintained to the data those systems hold. One approach is virtualising the instrument data system so that the operating system, instrument support software, and the data are archived together on a server. (Virtualisation is, in part, a process of making a copy of everything on a computer so that it can be stored on a server as a file or ‘virtual container’ and then executed on the server without the need for the original hardware. It can be backed up or archived so that it is protected from loss.) In the smart laboratory, system management is a significant function – one that may be new to many facilities. The benefits of doing it smartly are significant.

Computer-controlled experiments and sample processing

Adding intelligence to lab operations isn’t limited to processing instrument data; it extends to an earlier phase of the analysis: sample preparation. Robotic systems can take samples as they are created and convert them to the format needed by the instrument. Robotic arms, while still appropriate for many applications, have elsewhere been replaced with components more suitable to the task, particularly where liquid handling is the dominant activity, as in life science applications.

Success in automating sample preparation depends heavily on thoroughly analysing the process in question and determining:

- Whether or not the process is well documented and understood (no undocumented short-cuts or workarounds that are critical to success), and whether improvements or changes can be made without adversely impacting the underlying science;

- Suitability for automation: whether or not there are any significant barriers (equipment, etc.) to automation and whether they can be resolved;

- That the return on investment is acceptable and that automation is superior to other alternatives such as outsourcing, particularly for shorter-term applications; and

- That the people implementing the project have the technical and project management skills appropriate for the work.

The tools available for successfully implementing a process are clearly superior to what was available in the past. Rather than having a robot adapt to equipment that was made for people to work with, equipment has been designed for automation – a major advance. In the life sciences, the adoption of the microplate as a standard-format multi-sample holder (typically 96 wells, but 384 or 1,536 wells are also available, and denser forms have been manufactured) has fostered the commercial availability of readers, shakers, washers, handlers, stackers, and liquid addition systems, which makes the design of preparation and analysis systems easier. Rather than processing samples one at a time, as was done in early technologies, parallel processing of multiple samples is performed to increase productivity.
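Part of what makes the microplate easy to automate is that every well has a predictable address. The sketch below, in Python, maps a well index to the conventional row-letter and column-number name for the three plate sizes mentioned above. The row and column counts are the standard layouts; the function names and the row-major, zero-based indexing are assumptions made for illustration.

```python
import string

# Standard microplate layouts: number of wells -> (rows, columns).
PLATE_LAYOUTS = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}


def row_label(row: int) -> str:
    """0 -> 'A', 25 -> 'Z', 26 -> 'AA' (two letters are needed for 32-row plates)."""
    letters = string.ascii_uppercase
    if row < 26:
        return letters[row]
    return letters[row // 26 - 1] + letters[row % 26]


def well_name(index: int, wells: int = 96) -> str:
    """Map a zero-based, row-major well index to a name such as 'A1' or 'H12'."""
    rows, cols = PLATE_LAYOUTS[wells]
    if not 0 <= index < rows * cols:
        raise IndexError(f"well index {index} is outside a {wells}-well plate")
    row, col = divmod(index, cols)
    return f"{row_label(row)}{col + 1}"


first_well = well_name(0)         # 'A1'
last_well_96 = well_name(95)      # 'H12'
last_well_384 = well_name(383, wells=384)  # 'P24'
```

Because readers, washers and liquid handlers all share this addressing scheme, a worklist written against well names can be passed from one device to the next without translation.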

Another area of development is the ability to centralise sample preparation and then distribute the samples to instrumentation outside the sample prep area through pneumatic tubes. This technology offers increased efficiency by putting the preparation phase in one place so that solvents and preparation equipment can be easily managed, with analysis taking place elsewhere. This is particularly useful if safety is an issue.

Across the landscape of laboratory types and industries, the application of sample preparation robotics is patchy at best. Success and commercial interest have favoured areas where standardisation in sample formats has taken place.

The development of microplate sample formats, including variations such as tape systems that maintain the same sample cell organisation in life sciences, and standard sample vials for auto-samplers, are common examples. Standard sample geometries give vendors a basis for successful product development if those products can have wider use rather than being limited to niche markets.

Putting the pieces together

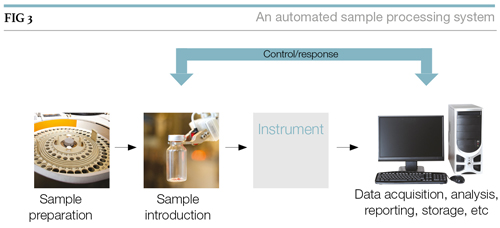

It’s not enough to consider in isolation sample preparation, the introduction of samples into instruments, the instruments themselves, and the data systems that support them. Linking them together provides a train of tasks that can lead to an automated sample processing system as shown in Figure 3.

The control/response link is needed to synchronise sample introduction and data acquisition. Depending on the nature of the work, that link can extend to sample preparation. The end result is a system that not only provides higher productivity than manual methods, but does so with reduced operating costs (after the initial development investment).

However, building a smart laboratory needs to look beyond commonplace approaches and make better use of the potential that exists in informatics technologies. Extending that train of elements to include a LIMS, for example, has additional benefits. The initial diagram above would result in a worklist of samples with the test results that would be sent to a LIMS for incorporation into its database.

Suppose there was a working link between a LIMS and the data system that would send sample results individually, and that each sample processed by the instrument would wait until the data system told it to go ahead. The LIMS has the expected range for valid results and the acceptable limits. If a result exceeded the range, several things could happen:

- The analyst would be notified;

- The analysis system would be notified that the test should be repeated;

- If confirmed, standards would be run to confirm that the system was operating properly; and

- If the system were not operating according to SOPs, the system would stop to avoid wasting material and notify the analyst.

The introduction of a feedback facility would significantly improve productivity.

At the end of the analysis, any results that are outside expected limits would have been checked and the system’s integrity verified. Making this happen depends on connectivity and the ability to integrate components.
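The feedback logic described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of any vendor’s LIMS: the function `review_result`, the action strings, and the callbacks `repeat_test` and `standards_pass` are hypothetical stand-ins for the real links to the instrument and data system, and the handling of a repeat that falls back in range is an interpretation the text leaves open.

```python
from typing import Callable, List


def review_result(
    value: float,
    low: float,
    high: float,
    repeat_test: Callable[[], float],
    standards_pass: Callable[[], bool],
) -> List[str]:
    """Return, in order, the actions taken for one sample result."""

    def in_range(v: float) -> bool:
        return low <= v <= high

    if in_range(value):
        return ["accept"]

    # Out of range: notify the analyst and ask the analysis system to repeat.
    actions = ["notify analyst", "repeat test"]
    if in_range(repeat_test()):
        actions.append("accept repeat")  # assumption: a good repeat ends the check
        return actions

    # Out-of-range result confirmed: run standards to check the system itself.
    actions.append("run standards")
    if standards_pass():
        actions.append("report confirmed out-of-range result")
    else:
        # System not operating according to SOPs: stop to avoid wasting material.
        actions += ["stop system", "notify analyst"]
    return actions


actions = review_result(12.0, 4.0, 10.0,
                        repeat_test=lambda: 12.1,
                        standards_pass=lambda: False)
```

In this run the repeat confirms the out-of-range value and the standards fail, so the sketch ends by stopping the system and notifying the analyst.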

Instrument integration

In order for the example described above to work, components must be connected in a way that permits change without rebuilding the entire processing train from scratch. Information technology has learned those lessons repeatedly as computing moved from proprietary products and components to user-friendly consumer systems.

Consumer-level systems aren’t any less capable than the earlier private-brand-only systems; they are just easier to manage and smarter in design.

Small Computer System Interface (SCSI), FireWire and Universal Serial Bus (USB) are a few examples of integration methods that enabled the user to extend the basic capability and have ready access to a third-party market of useful components. They also allowed the computer vendors to concentrate on their core product and satisfy end-user needs through partnerships; each vendor could concentrate on what they did best and the resulting synergy gave the users what they needed.

Now these traditional methods are being surpassed by the Internet of Things (IoT) and wirelessly connected devices, but the argument for connecting devices remains the same: is the value added worth the investment? The answer depends on the instrument, but generally it is more effective to connect the most widely used instruments, such as pH meters and weighing scales.

Connections are only part of the issue. The more significant factor is the structure of the data that is being exchanged: how it is formatted and how its content is organised. In the examples above, that is managed by the use of standard device drivers or, when called for, specialised device handlers that are loaded once by the user.

In short, hardware and software are designed for integration, otherwise vendors find themselves at a disadvantage in the marketplace.

Laboratory software comes with a different mindset. Instrument support software was designed first and foremost to support the vendor’s instrument and provide facilities that weren’t part of the device, such as data analysis. Integration with other systems wasn’t a factor.

That is changing. The increasing demand for higher productivity and better return on investment has resulted in the need for systems integration to get better overall system performance; part of that measure is to reduce the need for human interaction with the system. Integration should result in:

- Ease-of-use: integrated systems are expected to take less effort to get things done;

- Improved productivity, streamlined operations: the number of steps needed to accomplish a task should be reduced;

- Avoiding duplicate data: no need to look in multiple places;

- Avoiding transcription errors: integration will result in electronic transfers that should be accurate; this avoids the need to enter and verify data transfers manually;

- Improving workflow and the movement of lab data: reducing the need for people to make connections between systems – integration facilitates workflow; and

- More cost-effective, efficient lab operations.

The problem of integration, streamlining operations, and better productivity has been addressed via automation before: in manufacturing applications and clinical labs.

In the 1980s, clinical lab managers recognised the only way they were going to meet their financial objectives was to use automation to its fullest capability and drive integration within their systems.

The programme came under the title ‘Total laboratory automation’ and resulted in a series of standards that allowed instrument data systems to connect with Laboratory Information Systems (equivalent to LIMS) and hospital administrative systems.

Those standards were aggregated under HL7 (www.hl7.org), which provides both message and data formatting. While hospital and clinical systems have the advantages of a more limited range of testing and sample types, making standardisation easier, there is nothing in their structure to prevent them being applied to a wider range of instruments, such as mass spectrometry.

An examination of the HL7 structure suggests that it would be a good foundation for solving integration problems in most laboratories.

From the standpoint of data transfer and communications, the needs of clinical labs match those in other areas. The major changes would be in elements, such as the data dictionaries, and field descriptions, which are specific to hospital and patient requirements.
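To give a feel for the kind of formatting involved, the Python sketch below pulls the main fields out of a single HL7 version 2 observation result (OBX) segment, which is pipe-delimited text. It is deliberately simplified: it assumes the default `|` field separator, ignores escape sequences and repeated fields, and the sample segment, including the identifier `GLU^Glucose`, is made up for illustration rather than taken from a real coding system.

```python
from typing import Dict

# Positions of selected fields within an HL7 v2 OBX segment (OBX-2, OBX-3, ...).
OBX_FIELDS = {
    "value_type": 2,
    "observation_id": 3,
    "value": 5,
    "units": 6,
    "reference_range": 7,
    "abnormal_flags": 8,
    "result_status": 11,
}


def parse_obx(segment: str) -> Dict[str, str]:
    """Extract selected fields from one OBX segment (simplified sketch)."""
    fields = segment.split("|")
    if fields[0] != "OBX":
        raise ValueError("not an OBX segment")
    # Pad short segments so that trailing empty fields can be omitted by the sender.
    fields += [""] * (12 - len(fields))
    return {name: fields[position] for name, position in OBX_FIELDS.items()}


# Illustrative segment: a numeric (NM) result with units and a reference range.
obx = parse_obx("OBX|1|NM|GLU^Glucose||5.4|mmol/L|3.9-5.8|N|||F")
```

Nothing in this layout is tied to patients: the same value, units and reference-range fields would serve equally well for a result from an analytical instrument, which is the point made above about the data dictionaries being the part that would change.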

A cross-industry solution would benefit vendors as it would simplify their engineering and support, provide a product with wider market appeal, and encourage them to implement it as a solution.

Most of the early standards work carried out outside the clinical industry has focused on data encapsulation, while more recent efforts have included communications protocols:

- In the 1990s, efforts by instrument vendors led to the development of the AnDI (Analytical Data Interchange) standards, which resulted in ASTM E1947 – 98(2009) Standard Specification for Analytical Data Interchange Protocol for Chromatographic Data. It uses the public domain netCDF database structure, providing platform independence. This standard is supported in several vendor products but doesn’t see widespread use;

- SiLA Rapid Integration (www.sila-standard.org). The website states: ‘The SiLA consortium for Standardisation in Lab Automation develops and introduces new interface and data management standards, allowing rapid integration of lab automation systems. SiLA is a not-for-profit membership corporation with a global footprint and is open to institutions, corporations and individuals active in the life science lab automation industry. Leading system manufacturers, software suppliers, system integrators and pharma/biotech corporations have joined the SiLA consortium and contribute in different technical work groups with their highly skilled experts’;

- The Pistoia Alliance (www.pistoiaalliance.org) states: ‘The Pistoia Alliance is a global, not-for-profit precompetitive alliance of life science companies, vendors, publishers and academic groups that aims to lower barriers to innovation by improving the interoperability of R&D business processes. We differ from standards groups because we bring together the key constituents to identify the root causes that lead to R&D inefficiencies and develop best practices and technology pilots to overcome common obstacles’;

- The Allotrope Foundation (www.allotrope.org): ‘The Allotrope Foundation is an international association of biotech and pharmaceutical companies building a common laboratory information framework (‘Framework’) for an interoperable means of generating, storing, retrieving, transmitting, analysing and archiving laboratory data and higher-level business objects such as study reports and regulatory submission files’; and

- The AnIML markup language for analytical data (animl.sourceforge.net) is developing a standard specification under the ASTM (www.astm.org/DATABASE.CART/WORKITEMSWK23265.htm) that is designed to be widely applicable to instrument data. Initial efforts are planned to result in implementations for chromatography and spectroscopy.

A concern with the second, third and fourth points above is that they are primarily aimed at the pharmaceutical and biotech industries. While vendors will want to court that market, the narrow focus may slow adoption since it could lead to the development of separate standards for different industries, increasing the implementation and support costs. Common issues across industries and applications lead to common solutions.

The issue of integrating instruments with informatics software is not lost on the vendors. Their product suites offer connection capabilities for a number of instrument types to ease the work.

Planning a laboratory’s information handling requirements should start from the most critical point, a LIMS for example, and then move on to supporting additional technologies.

Chapter summary

The transition from processing samples and experiments manually to the use of electronic systems to record data is a critical boundary. It moves from working with real things to their digital representation in binary formats. Everything else in the smart laboratory depends on the integrity and reliability of that transformation.

The planners of laboratory systems may never have to program a data acquisition system, but they do have to understand how such systems function, and what the educated lab professional’s role is in their use. Such preparatory work will enable the planners to take full advantage of commercial products.

One key to improving laboratory productivity is to develop an automated process for sample preparation, introducing the sample into the instrument, making measurements, and then forwarding that data into systems for storage, management, and use. Understanding the elements and options for these systems is the basis for engineering systems that meet the needs of current and future laboratory work.

The development of the smart laboratory is at a tipping point. As users become more aware of what is possible, their satisfaction with the status quo will diminish as they recognise the potential of better-designed and integrated systems.

Realising that potential depends on the same elements that have been successful in manufacturing, computer graphics, electronics, and other fields: an underlying architecture for integration, based on communications and data encapsulation/interchange standards.
