‘Over the next five years, we want to make sure every scientist and engineer has access to the computing power he needs, from the individual desktop, to the department, to whole company clusters,’ says Bjorn Tromsdorf, the high-performance computing product and solutions manager of EMEA Microsoft.

And when a company like Microsoft makes a statement like that, you know high performance computing is headed for the mainstream.

It is part of a wider trend throughout the scientific community, with research groups everywhere either setting up their own parallel computing resources or taking part in grid infrastructures that make use of computing power spread across continents.

Only this April, the British Particle Physics and Astronomy Research Council awarded £30m to the international GridPP that will help process the high volumes of data to be generated by the Large Hadron Collider later this year (see panel).

The sky is the limit with what these systems can do, quite literally. Researchers from the Universidad Autónoma de Madrid have created their own universe, 500 Megaparsecs in diameter and consisting of 2bn objects, using the MareNostrum supercomputer in Barcelona, to try to answer profound questions about how our universe formed and evolved.

The simulations examine collisions between the universe’s stellar dust and the elusive dark matter – something that scientists at present know very little about.

‘In astrophysics, there is no other way to answer these questions,’ says Francesc Subirada, the associate director of the Barcelona Supercomputing Centre where MareNostrum is housed. ‘How can we ever know the map of the universe at the start of time?’

The only answer is to assume a model, simulate the processes based on this and then compare the predicted data with the current state of the universe. Of course, this takes a huge number of calculations that would take thousands of years even on the world’s most powerful processors. Instead, the calculations are split over 10,240 processors working in tandem to vastly decrease the time taken to perform the job.
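The idea of splitting one large job across many processors can be shown in miniature. The sketch below is purely illustrative and is not the MareNostrum code: the function names (`split_range`, `partial_sum`, `parallel_sum`) are invented for this example, and a sum of squares stands in for the real physics. It divides a range of work into contiguous slices, hands each slice to a separate worker process, and adds up the partial results.

```python
from concurrent.futures import ProcessPoolExecutor


def split_range(n_items, n_chunks):
    """Split range(n_items) into n_chunks contiguous (start, stop) pairs."""
    base, extra = divmod(n_items, n_chunks)
    bounds, start = [], 0
    for i in range(n_chunks):
        # The first `extra` chunks take one additional item each.
        stop = start + base + (1 if i < extra else 0)
        bounds.append((start, stop))
        start = stop
    return bounds


def partial_sum(bounds):
    """Hypothetical stand-in for an expensive calculation on one slice."""
    start, stop = bounds
    return sum(i * i for i in range(start, stop))


def parallel_sum(n_items, n_workers):
    """Run partial_sum on each slice in its own process and combine the results."""
    chunks = split_range(n_items, n_workers)
    with ProcessPoolExecutor(max_workers=n_workers) as pool:
        return sum(pool.map(partial_sum, chunks))
```

A call such as `parallel_sum(1_000_000, 4)` gives the same answer as the serial loop, with the work shared between four processes. On platforms that spawn rather than fork worker processes, the call should sit under an `if __name__ == "__main__":` guard. Real simulations are harder because the slices must exchange data as they run, which is what message passing libraries are for.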

It is for this reason the MareNostrum has been named the fifth most powerful computer in the world, and easily the most powerful in Europe.

However, it’s not just world-class systems that are answering questions about our universe: many research teams are harnessing the power of much smaller parallel computers to analyse the huge streams of data supplied by modern telescopes. The Cambridge Astronomical Survey Unit of Cambridge University is one such group.

‘We’re interested in stars for their bodies, not for their minds,’ says Jim Lewis, senior research associate at the unit. Instead, his team take a statistical approach to classify stellar objects. To do this, they have just started to use the WX2 database from Kognitio to analyse up to 500GB of telescope data each night.

The parallel approach has allowed increasingly complex queries, even on unindexed properties of the stars. The high power of Kognitio’s software has proven particularly useful when classifying faint objects shown in the telescope images, a problem exacerbated by distortions caused by the Earth’s atmosphere.

It is also being used to interrogate and cross-reference the vast online astronomical databases. ‘Large area surveys are taking over astronomy,’ says Lewis. ‘We are in danger of drowning in data.

‘Using WX2, the parallel programming is hidden from you. There are a few tricks in forming SQL queries, but it is all very, very standard.’ He has highlighted a key feature of the explosion of HPC software landing in research labs: scientists don’t want to become expert programmers.

It is for this reason that companies such as Microsoft, The Mathworks and Wolfram are trying to soften the blow using a variety of techniques. Microsoft provides an easy infrastructure to run computer clusters with Microsoft Compute Cluster Server 2003.

‘To install and maintain a cluster used to be very complex,’ says Bjorn Tromsdorf, the HPC product and solutions manager at EMEA Microsoft. ‘Now the MPI [message passing interface] is wizard based, allowing smaller companies [without programming expertise] to use the system.’

In addition, researchers don’t want to change from the programs they have been using all their working lives, such as Matlab from The Mathworks. In response to this, The Mathworks has released the Distributed Computing Toolbox to execute parallel programs from within the familiar Matlab interface.

‘We hope people will do parallel computing from their desktop,’ says Jos Martin, the team leader for the Distributed Computing Toolbox. ‘Machines are becoming clusters in their own right: soon everyone will have 64 core processors. We’re becoming much more adept at making it usable.’

Wolfram, the manufacturer of Mathematica, has also produced a parallel computing module, gridMathematica, for its successful mathematics package. In addition to parallel numerical calculations, it can provide exact symbolic manipulation, in a fraction of the time.

Roman Maeder of Wolfram believes that multi-core processors are the future of HPC for most scientists. ‘Nearly every new computer now has two cores, which could increase to four by next year.’

Interactive Supercomputing (ISC) is a company that specialises in providing the invisible bridge between desktop scientific applications (including Matlab) and parallel computing. ‘The scientists don’t need to stop their science and hand their work over to the programmers,’ says Ilya Mirman, vice president of marketing at ISC. ‘They are in control.’

It is telling that ISC was founded by an applied mathematics professor at the Massachusetts Institute of Technology.

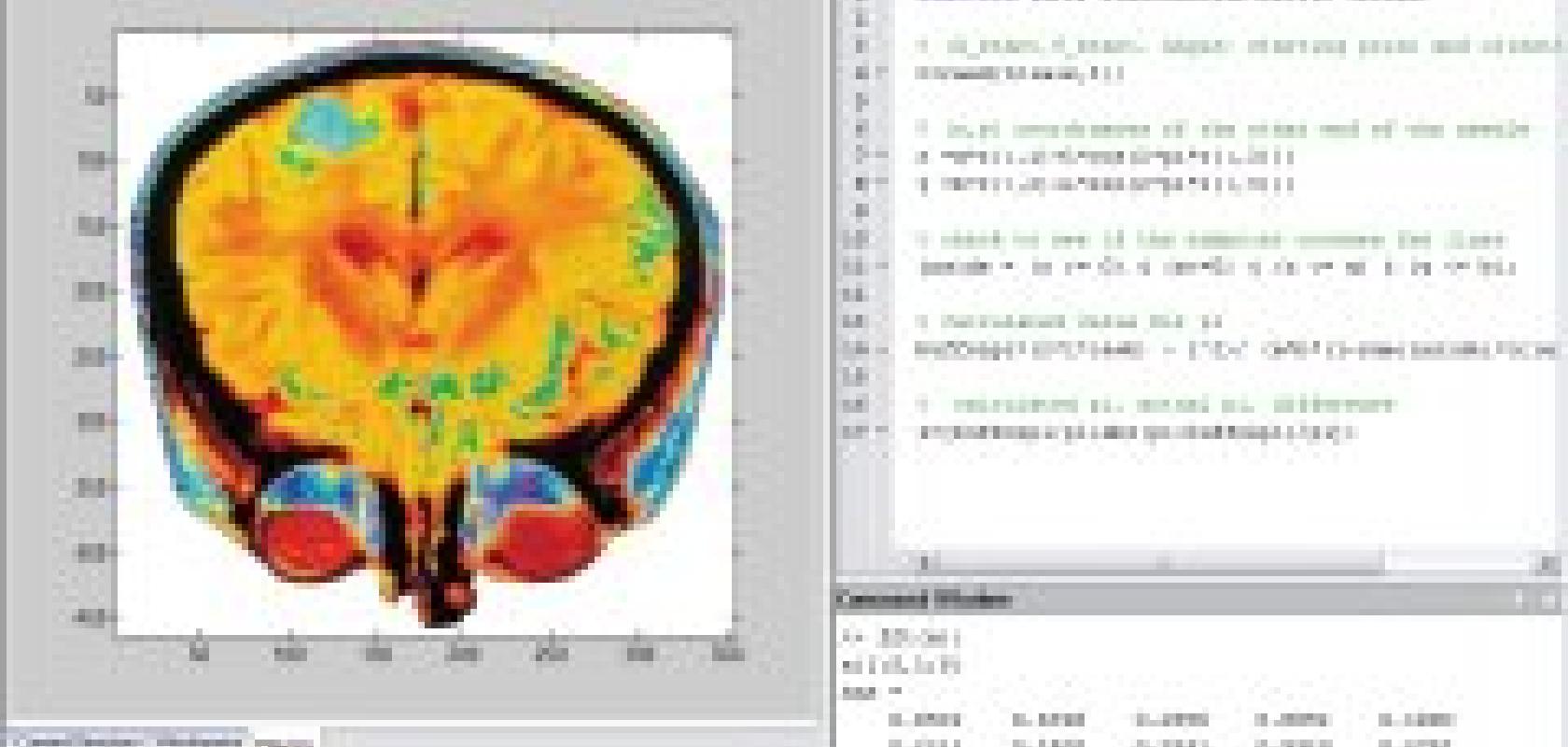

A high-resolution brain scan made possible with Star-P from Interactive Supercomputing

As Microsoft’s Tromsdorf pointed out, the benefits of HPC are not limited to the physical sciences. Mirman explained how ISC’s Star-P software is currently being used at the USA’s National Cancer Institute to plumb genetics data to find factors that contribute to the disease. Another important application is image analysis, and Star-P provides a means of processing higher resolution MRI brain scans, typically to provide a detailed picture of the transmission of drugs over time.

‘It’s not just faster,’ says Mirman, ‘it changes the way you think about the data.’ A team of ecologists at the University of California at Santa Barbara has also made use of Star-P to better plan conservation efforts, by studying the effects of landscape on the migration of wolverines and pumas in southern California. Rather than just measuring the physical distance, the research team created a complex model with millions of regions that accounted for the resistance posed by mountains, rivers and forests on the animals’ travel.
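To see why resistance matters more than straight-line distance, consider a toy landscape. The sketch below is an assumption-laden illustration, not the Santa Barbara team’s model or anything from Star-P: it uses a standard shortest-path search (Dijkstra’s algorithm) over a small grid, and the function name `least_cost` and the resistance values are invented for the example. Each cell holds a resistance, and the cost of a route is the sum of the resistances of the cells entered.

```python
import heapq


def least_cost(resistance, start, goal):
    """Dijkstra's algorithm on a grid; each step costs the resistance of the cell entered."""
    rows, cols = len(resistance), len(resistance[0])
    best = {start: 0}
    queue = [(0, start)]
    while queue:
        cost, (r, c) = heapq.heappop(queue)
        if (r, c) == goal:
            return cost
        if cost > best[(r, c)]:
            continue  # stale queue entry; a cheaper route was already found
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols:
                new_cost = cost + resistance[nr][nc]
                if new_cost < best.get((nr, nc), float("inf")):
                    best[(nr, nc)] = new_cost
                    heapq.heappush(queue, (new_cost, (nr, nc)))
    return None  # goal unreachable


# Hypothetical landscape: 1 = open ground, 9 = a mountain ridge.
landscape = [
    [1, 9, 1],
    [1, 9, 1],
    [1, 1, 1],
]
```

Here the two corners of the top row are only two steps apart, but crossing the ridge costs 10, while the six-step detour around it costs 6. Scaling this kind of calculation to millions of regions is where parallel computing comes in.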

I started this article by stating the sky is the limit for parallel computing, but while it seems the software will soon be capable of dealing with whatever is put in its path, it may be the way we power the hardware that is the biggest hurdle. ‘It may eventually be the electrical power that limits what we can do,’ says Subirada of the Barcelona Supercomputing Centre. ‘Currently, MareNostrum consumes more power than the whole of the university campus.’

Cambridge researchers predict pill absorption using Newton's Law - and HPC

We’ve seen how researchers at the University of Cambridge have used parallel computing to understand objects at the far end of the cosmos, but materials scientists at the university have successfully used HPC to accurately understand particles at a much smaller scale.

The Pfizer Institute, a joint venture between the university, the Cambridge Crystallographic Data Centre and Pfizer, studies the interactions between the minute granules in tablets, to predict the physical properties, such as solubility, that determine how the drug is delivered to the body.

Rather than studying the molecular properties of the drugs, the group’s software uses a finite element method to examine their mechanical properties under Newton’s laws, to find the perfect blend of carrier and active ingredient. The calculations are so intricate that some of the simulations still take months to perform, even using an HPC cluster designed and implemented by Cambridge Online.

‘In a worst-case scenario, we have reduced formulation time by a factor of two or three,’ says Dr James Elliot, the leader of the Materials Modelling Group. The approach has been so successful that Pfizer are now considering the integration of granular analyses into their own research, something they’d never done before.

‘I am absolutely certain the future of scientific computing is multi-core processing, but software must be written to exploit it,’ says Elliot.

High-energy physics has always required the very latest in computing technology: 16 years ago CERN had just publicised the new World Wide Web project and later this year, as the Large Hadron Collider (LHC) finally becomes active, it will use an international grid to process enough data in one year to fill a 20km high tower of CDs.

Dr David Britton, from Imperial College London’s Department of Physics, who played a key part in creating the grid, says: ‘Processing, analysing and storing this data on one site would involve constructing enormous computing facilities, so instead we are developing the grid to ensure that computing resources all over the planet can be used to carry out reconstruction and analysis jobs.’

The Particle Physics and Astronomy Research Council has awarded £30m to develop the British arm of the grid, which will include 20,000 processors by 2011.

Elsewhere, HPC is helping to design another huge particle accelerator: the proposed 40km-long International Linear Collider, whose data, it is hoped, will complement that of the LHC. Researchers at Queen Mary College at the University of London are running Matlab operations in parallel to simulate the path of the high-energy particles through the accelerator.

The beams are just 5nm wide and must collide along their 20km-long paths at velocities approaching the speed of light. It is clearly an operation that requires excruciating accuracy and the design must cater for such tiny disturbances as those caused by the Earth’s rotation and the gravitational pull of the moon.

A possible design of the International Linear Collider. Image courtesy of SLAC.