Babbage's Analytical Engine (1834) was conceived as a general purpose calculating machine and, in 1836, he imagined a version of this machine that could manipulate symbols as well as numbers[1]. The first computer programs designed to carry out symbolic computations were described in 1953 by Kahrimanian[2] and Nolan[3]. Since then, many general tools for symbolic and numeric computation have been developed. They include REDUCE (1968), Macsyma (1970), Maple (1983), MATLAB (1984), and Mathematica (1988).

These tools have been refined and improved over the years, and are now very powerful computational aids for all sorts of problems in science, engineering and technology. More generally they are finding applications outside of traditional scientific computing - in finance, for example. And so, there is a problem. How does one judge which package is 'best'? Is there a way to measure performance that will help potential users make a better informed purchase?

Tests currently in the literature

One way to compare packages is to subject them to a suite of benchmark problems and note the CPU-times required for solving the problems as well as the quality of the output. In 1999 Wester[4] published the results of a comprehensive benchmarking exercise. He tested seven packages with a suite of 542 problems, divided into 30 categories, that ranged over matrix theory, special functions, partial differential equations, and Boolean Logic. He uses 15 ways to assess the answers produced ranging from 'could not do problem' to 'minimal success' or 'a fatal program error occurred'. His test results are available at: http://math.unm.edu/~wester/cas_review.html.

But seven years is a long time in the world of software and the packages studied by Wester have now gone through numerous upgrades. Wester's results are now out of date, but they provide a model for a thorough and comprehensive suite of tests and a broad range of measures of success.

In 2004 Steinhaus[5] compared nine programs under the headings: mathematical functionality, graphical functionality, programming environment, data handling, availability and speed.

Neither Wester nor Steinhaus conclude that a particular package is 'best' overall, but that a particular version has great strengths and some weaknesses.

The Symbolic Data Project (www.SymbolicData.org) is a recent attempt to systematise and standardise benchmarking for computer algebra systems. According to Olaf Bashmann (www.symbolicdata.org/Papers/linz-06.pdf) the Symbolic Data Project has the following three main goals: '(a) to systematically collect existing symbolic computation benchmark data and to produce tools to extend and maintain this collection; (b) to design and implement concepts for trusted benchmarks computations on the collected data; and (c) to provide tools for data access /selection / transformation using different technologies.' This is a fascinating initiative and if it achieves its goals it will provide an extremely useful reference for potential buyers.

Mathematica has recently launched the Wolfram Technology Guide (www.wolfram.com/technology/guide) with the intention of showing there is more to technical computing than a list of features. The purpose of this guide is to give users a look behind the scenes into 35 topics, such as automatic algorithm selection, extended precision computation, piecewise functions, and sparse arrays.

The SIAM 100-digit challenge

Perhaps the 10 problems in the SIAM 100-digit challenge[6] could be taken to comprise a benchmark. They were devised by Nick Trefethen of Oxford University and were offered as a challenge to the readers of SIAM News (Vol. 35, No. 1) in early 2002. (www.siam.org/siamnews/01-02/challenge.pdf). The high-precision numerical computations required to solve these problems can serve as a test of a program's algorithms and numerical computing ability. They range from evaluating a tricky integral to solving the partial differential equations that describe temperature distribution across a heated heat plate. Contestants obtained solutions using many systems including Mathematica http://mathworld.wolfram.com/Hundred-DollarHundred-Digit ChallengeProblems.html and, Maple www.math.ubc.ca/~israel/challenge/challenge1.html.

Several contestants published their solutions e.g. http://web.comlab.ox.ac.uk/oucl/work/nick.trefethen/hundred.html. At least two of the winning teams obtained all the answers with Mathematica, sometimes with a single command. See www.stanwagon.com and http://mathworld.wolfram.com/news/2002—5-25_challenge.

Other winners used Maple (www.maplesoft.com) but commented, for example, that some numerical integrals needed to be broken into several pieces and each piece transformed in a different way, whereas Mathematica seems to do this automatically, http://groups.google.com/group/sci.math.symbolic/msg/0a63bdf2fb9e8dcd? dmode=source.

Comparing the time it takes for a package to solve one of these problems (typically much less than a second) is not very useful as it depends on the specification of the computer used and the way the solution is implemented.

Problems from RNC 7

Another set of 16 challenging problems on exact real arithmetic software was published in July 2006[7] http://rnc7.loria.fr/competition.html. The goal is to measure the performance of numerical computing packages on a set of challenging problems, emphasising accuracy rather than timings. Of the packages considered in this article, only Mathematica seems to have competed and their team submitted the only perfect score.

Global optimisation

Alternatively, optimisation or global optimisation could also be used as a benchmark. Recently, The Mathworks launched the 'Genetic Algorithm and Direct Search Toolbox' for optimisation problems. The algorithms in this toolbox are based on a kind of natural selection that randomly selects individuals from a population of initial solutions to create a new population. This new population then becomes the initial solution and the process continues until the population evolves to an optimal solution. You can then use traditional optimisation techniques to refine your solution.

MATLAB, Mathematica, and Maple come with software that can solve a wide range of day-to-day local optimisation problems. Many optimisation algorithms, like Newton Raphson, are based on the 'downhill' methods, i.e. the algorithm keeps searching lower and lower until it reaches a minimum. These algorithms are designed to find local optima of functions that are continuous and differentiable. Maple's built-in local optimisation package supports linear, quadratic and nonlinear problems, and both linear and nonlinear least-squares problems. Maple will find global optima for univariate functions as well as local optima for multivartiate functions.

Global optimisation is a far more difficult problem, beset with difficulties such as multiple local minima, non-differentiability, and complicated constraints. Mathematica has the built-in function NMinimize[ ] for finding global optima. NMinimize[ ] uses the Nelder Mead method by default but it can also use Simulated Annealing or Differential Evolution.

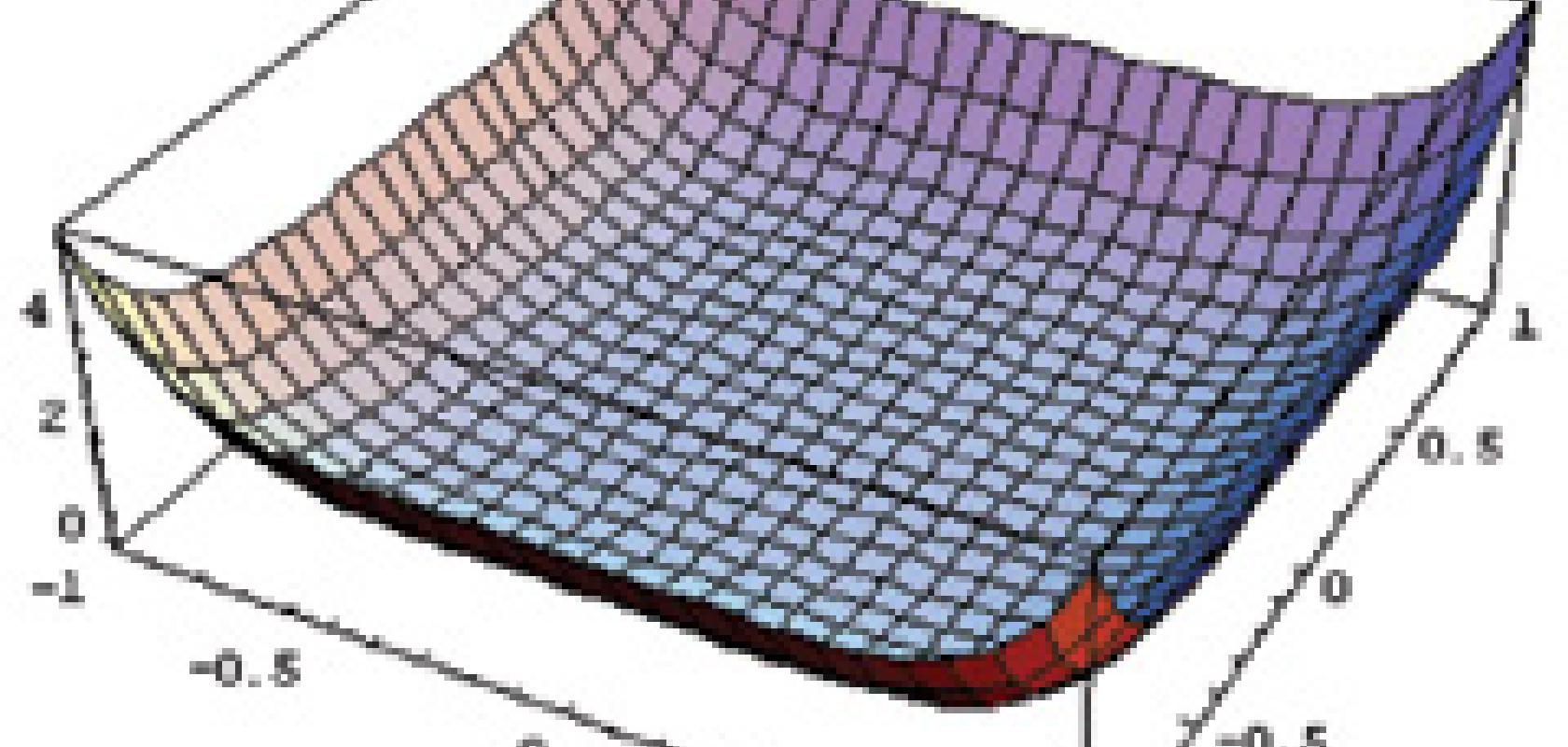

Examples of typical functions that can be minimised by NMinimize[ ] are: the wavy function |2 (x - 24) + (x - 24) Sin (x - 24)|, discontinuous functions, and the ashtray function f (x,y) = x6 (2.0 + Sin (1/x)) + y6 (2.0 + Sin (1/y)). The ashtray function looks like:

NMinimize[ ] can find the global minimum of both the wavy function above as well as Rastrigin's function: 20+x21 + x22 - 10 (Cos (2πx1) + (Cos (2πx2):

and the function in Problem 4 of the SIAM 100-digit challenge http://www.siam.org/siamnews/01-02/challenge.pdf:

f (x,y) = exp(Sin(50x)) + Sin(60exp(y)) + Sin(70Sin(x)) + Sin(Sin80(y)) - Sin(10(x+y)) + 1/4 (x22) + y

Numerically, global optimisation problems are extremely difficult. They arise in many areas of engineering, business, planning and finance and they can consist of many thousands of variables and constraints.

Even more difficult global optimisation problems require specialised software for their solution and these problems offer a good test of a system's numerical abilities. 'Global Optimization 5.2'[8] (www.wolfram.com/products/applications/globalopt/) is a package designed to enhance Mathematica's built-in optimisation functionality. Global Optimization is a collection of 10 functions for constrained and unconstrained global nonlinear optimisation. It uses Mathematica as an interface for defining nonlinear systems to be solved and for computing function numeric values. Any function computable by Mathematica can be used as input. A useful feature of these commands is that the initial search range given by the user is not binding - it is merely a suggestion for starting the search.

An interesting example of the commands available in Global Optimization 5.2 is the MaxAllocation[ ] function. It is designed for allocation problems such as arise in investment, where a fixed amount of money is to be allocated across a series of investment options and the return on the investments is to be optimised. The path-following algorithm used can efficiently handle problems over a thousand variables. This function is ideal for quadratic programming, investment allocation, and hedge-fund creation applications.

Another useful command in Global Optimization 5.2 is the GlobalPenaltyFn[ ]. This is a hill-climbing algorithm for nonlinear functions with non-analytic equality and inequality constraints. It is designed to be robust to local minima and to solve problems with hundreds of variables. No derivatives are required, and the user-defined objective function can even be non-differentiable. This means, for example, that the 'function' can be a black box consisting of a set of inputs and an output that is to be optimised. Multiple starts allow the user to find multiple minima if they exist. Neither bounds nor a close initial guess are needed. For example, the problem with finding the global minimum of the ashtray function described above is that the minimum is at where the function is indeterminate. Global Optimization's[8] GlobalPenaltyFn[ ] finds the minimum exactly.

Both Maple (see www.maplesoft.com/products/toolboxes/globaloptimization/) and Mathematica users (see www.wolfram.com/products/applications/mathoptpro/) can avail of an add-on for solving multivariate global optimisation called MathOptimizer[9] .A Maple version of this package is called the 'Global Optimization toolbox'. It can carry out global and local optimisation of nonlinear problems with hundreds of variables and constraints. MathOptimizer consists of two core solver packages. One of these packages carries out a deterministic and stochastic global search in a given range.

It can utilise three strategies in this global search - classical branch-and-bound, a single-start random search algorithm a single-start random search algorithm, and an adaptive multi-start random search. The second package is used for a precise local search. There is also a solver-integrator that allows the packages to be used individually or in a combined attack.

Recently Loehle[10] (www.addlink.es/pdf/AGDWeb1187.pdf) compared Mathematica's built-in optimisation functions with those provided by Global Optimization[8] and MathOptimizer[9].

[1] D. Swade, The Difference Engine - Charles Babbage and the Quest to Build the First Computer. Viking, 2000.

[2] H.G. Kahrimanian, Analytic Differentiation by a Digital Computer. Master's Thesis, Temple University: Philadelphia, Pennsylvania, 1953.

[3] J.F. Nolan, Analytic Differentiation on a Digital Computer. Master's Thesis, Mathematics Department, Massachusettes Institute of Technology: Cambridge, Massachusettes, 1953.

[4] M.J. Wester, Computer Algebra Systems - a practical guide, John Wiley & Sons Ltd., 1999.

[5] S. Steinhaus, Comparison of mathematical programs for data analysis (Edition 4.42), www.scientificweb.de/ncrunch/, 2004.

[6] F. Bornemann, D. Lauie, S. Wagon, and J. Waldvogel, The SIAM 100-Digit Challenge - A Study in High-Accuracy Numerical Computing. SIAM, 2004.

[7] 7th Conference on Real Numbers and Computers (RNC 7), LORIA, Nancy, France, 2006.

[8] C. Loehle, Global Optimization 5.2, Loehle Enterprises, 1258 Windemere Ave., Naperville, IL 60564, USA, 2006.

[9] J.D. Pinter, MathOptimizer Professional 2.0, Pinter Consulting Services, Inc., 129 Glenforest Drive, Halifax, NS, Canada B3M 1J2, 2006.

[10] C. Loehle, Global optimization using Mathematica: A test of software tools, Mathematica in Education and Research, vol. 11, pp. 139-152, 2006.