The geothermal sector is a hot topic of active research within the growing renewable energy industry. Yet the detection and extraction of geothermal resources involve many complexities and, while simulation and modelling help to address some of these challenges, there are still issues to overcome.

In recent times, these challenges have been largely addressed by multiphysics simulations, which match the multiphysics nature of geothermal resource detection and extraction from shallow to deep subsurface levels.

For example, simulation and modelling have helped to reduce the high exploration and investment costs for the sector, and to mitigate the risk of failure during the extraction and drilling/stimulation phases of a geothermal project. They have achieved this through improvements in resource assessment and forecasting, the optimisation of extraction technologies and techniques, and the mitigation of environmental impact.

Seismic interpretation is often the first simulation that takes place in geothermal, oil or gas exploration, as Rick Watkins, regional manager at Altair, explained: ‘Fundamentally, it is reverse-engineering the behaviour of waves traveling through the subsurface rock. The waves are generated by thumpers on the ground/water surface and their reflections are captured by sensors.’
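As a rough illustration of the 'reverse-engineering' Watkins describes, the simplest step in reflection seismics converts the two-way travel time of an echo into a reflector depth. The sketch below assumes a single homogeneous layer with a known, constant wave velocity, and a source and sensor at the same surface location. The function name and the numbers are illustrative only; commercial and proprietary interpretation codes are far more involved.

```python
def reflector_depth(two_way_time_s, velocity_m_per_s):
    """Depth of a flat reflector from a zero-offset reflection.

    Assumes one homogeneous layer, so the wave travels down and back
    at the same constant velocity.
    """
    if two_way_time_s < 0 or velocity_m_per_s <= 0:
        raise ValueError("time must be non-negative and velocity positive")
    # The wave covers the depth twice (down and back up), hence the factor 1/2.
    return velocity_m_per_s * two_way_time_s / 2.0


# Hypothetical values: a reflection recorded 1.2 s after the source fires,
# in rock with an assumed velocity of 3000 m/s.
depth_m = reflector_depth(1.2, 3000.0)
print(f"Estimated reflector depth: {depth_m:.0f} m")
```

In practice the velocity is itself unknown and varies with depth, which is one reason the interpretation step can produce so much variability between models.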

There are a few commercial codes for this (SeisSpace PROMAX and Landmark are two of the dominant players) but there are many individual seismic processing companies, in addition to operators, that have their own proprietary interpretation codes. Watkins said: ‘For these applications, the modelling tools are used to interpret what the simulation produces, rather than the other way around. There can be a lot of variability in the models generated at this step in the process. The companies and groups running these applications tend to be highly invested in High Performance Computing (HPC) because of the scalability of this operation.’

‘As such, integration with a HPC workload management system, such as Altair’s PBS Works suite, can be very important to the overall performance of the simulation. As hybrid- and cloud-computing become more prevalent, the benefits of integrations that enable remote visualisation, job submission and cloud management are greatly amplified because the simulators are able to access tremendous amounts of compute when they need them (as long as the input data can be provided to the data centre economically),’ he added.

This capability to link simulations with the computational power required to investigate potential geothermal-rich sites, and then use further modelling to optimise the extraction of these resources, has opened the door to more cost- and time-effective geothermal energy production in the field.

Hydraulic fracturing

Hydraulic fracturing, more commonly known as fracking, is a method to increase the production of oil and gas from certain types of geological structures. The process involves drilling down into the rock and injecting a high-pressure water, sand and chemical mixture to release the oil or gas held inside shale rock.

While this process is easy to describe, the mechanics involved are more complicated. Andy Cai, applications engineer at Comsol, explained: ‘Given the multiphysics nature of fracturing involved when there is fluid flow through formations within the earth, many companies and researchers have turned to multiphysics simulation to study this phenomenon. There is a need to couple fluid flow, heat transfer, structure mechanics, as well as acoustics.’

Simulation often serves as the basis of analysis in this area because the physical structures involved are hundreds or thousands of metres under the earth’s surface, making them difficult to test in a real-world environment. Cai said: ‘After setting up the physics properties, boundary conditions and meshes for the model, the analysis is ready to run. Extracting measurements such as efficiency and energy flux can be determined during simulation, or via post-processing after simulation.’
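The workflow Cai outlines (material properties, boundary conditions, mesh, solve, post-process) can be sketched on a toy problem. The example below is a deliberately simplified stand-in, not a Comsol model: it assumes steady one-dimensional heat conduction through a uniform rock column with fixed temperatures at the top and bottom, solves it by finite differences with a Gauss-Seidel iteration, and then extracts the conductive heat flux as a post-processing step. All function names and values are hypothetical.

```python
def solve_steady_conduction(depth_m, n_nodes, t_top_c, t_bottom_c,
                            tol=1e-10, max_iter=100_000):
    """Solve d2T/dz2 = 0 on a uniform 1D mesh with fixed end temperatures."""
    dz = depth_m / (n_nodes - 1)          # mesh spacing
    temps = [t_top_c] * n_nodes           # initial guess, top boundary included
    temps[-1] = t_bottom_c                # boundary condition at depth
    for _ in range(max_iter):
        max_change = 0.0
        # Gauss-Seidel sweep: each interior node becomes the mean of its neighbours.
        for i in range(1, n_nodes - 1):
            new_value = 0.5 * (temps[i - 1] + temps[i + 1])
            max_change = max(max_change, abs(new_value - temps[i]))
            temps[i] = new_value
        if max_change < tol:
            break
    return temps, dz


def surface_heat_flux(temps, dz, conductivity_w_per_m_k):
    """Post-process with Fourier's law, q = -k dT/dz, with z positive downwards."""
    gradient = (temps[1] - temps[0]) / dz
    return -conductivity_w_per_m_k * gradient


# Hypothetical set-up: 1 km column, 10 C at the surface, 40 C at depth,
# and an assumed rock conductivity of 2.5 W/(m K).
temps, dz = solve_steady_conduction(1000.0, 11, 10.0, 40.0)
flux = surface_heat_flux(temps, dz, 2.5)
print(f"Mid-column temperature: {temps[5]:.2f} C")
print(f"Heat flux at surface: {flux:.4f} W/m^2 (negative means upwards)")
```

A real fracturing study couples several such physics on a 2D or 3D mesh, but the sequence of steps is the same.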

When investigating a potential fracking site, geophysicists characterise the underground formations by detecting and analysing acoustic waves from seismic activity, such as earthquakes. While the acoustic waves can travel long distances, they neither provide the level of detail required about the formation’s properties nor track the water (known as pore water) travelling through the formation. Research from the Colorado School of Mines suggests that electromagnetic disturbances associated with seismic activity could provide this missing information. However, electromagnetic waves do not propagate as far as acoustic waves, so theoretical models and lab experiments, complemented by multiphysics simulations, are needed to identify and track pore water.

The team at the Colorado School of Mines created a system to locate fracking fluids by investigating these electromagnetic disturbances. They simulated fracking events and produced synthetic seismograms and electrograms by linking COMSOL Multiphysics and MATLAB. The research involved a physical experiment in which saline water was pumped under high pressure into a hydraulically fractured porous cement cube.

The underlying physics, such as the hydromechanical equations, were well-defined in the software, so time-consuming calculations were not necessary. The team could iterate and develop a way to track underground fluid flows to better map and understand subsurface formations and dynamics. An obvious application here is to track the fracking fluids used in this oil and gas extraction technique.

Not only does a well-benchmarked numerical model such as this bring down the upfront cost of site planning, development and monitoring, but the tests can also be run on a computer in a much shorter time than an on-site experiment would take, according to Cai, who added: ‘The results of the simulation are also a great resource for marketing and collaboration, as one can compare all historical data with ongoing project metrics and predict the output of the next stage in a single model and platform.’

Fracking extraction

There are many complicated phases involved in safely and successfully extracting oil and gas from beneath the earth’s surface during fracking. These include: drilling a useable borehole, providing a conduit from the underground reservoir to the surface, managing the flow of fluids from the reservoir to the surface, and disposing of waste products, for example by reinjecting untreatable fluids back underground.

To accurately model this range of critical factors, ExxonMobil used Dassault Systemes’ Simulia applications to develop a fully coupled formulation for hydraulic fracture growth using two advanced finite element methods.

A cohesive zone method (CZM) was developed, in which the fracture trajectory is confined to a plane, and an extended finite element method (XFEM) was also developed where the fracture trajectory is entirely solution dependent. Additional models were also implemented in the Abaqus application to account for the inelastic deformation seen in soft rock.
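To give a feel for what a cohesive zone description involves, the sketch below implements a bilinear traction-separation law, a common textbook ingredient of cohesive zone models. The article does not say which law ExxonMobil used, so this is an assumed illustration rather than its formulation: the function names and parameter values are hypothetical, and the code covers monotonic opening only, with no unloading or damage tracking.

```python
def bilinear_traction(opening_m, peak_traction_pa,
                      opening_at_peak_m, opening_at_failure_m):
    """Traction carried across a cohesive surface for a given opening."""
    if not 0.0 < opening_at_peak_m < opening_at_failure_m:
        raise ValueError("need 0 < opening_at_peak_m < opening_at_failure_m")
    if opening_m <= 0.0:
        return 0.0
    if opening_m <= opening_at_peak_m:
        # Elastic branch: traction rises linearly up to the peak strength.
        return peak_traction_pa * opening_m / opening_at_peak_m
    if opening_m < opening_at_failure_m:
        # Softening branch: traction decays linearly to zero as the faces separate.
        remaining = opening_at_failure_m - opening_m
        return peak_traction_pa * remaining / (opening_at_failure_m - opening_at_peak_m)
    return 0.0  # fully separated: the fracture faces carry no traction


def fracture_energy(peak_traction_pa, opening_at_failure_m):
    """Area under the bilinear curve, in J/m^2."""
    return 0.5 * peak_traction_pa * opening_at_failure_m


# Hypothetical parameters: 2 MPa strength, peak at 10 microns, failure at 100 microns.
for opening in (5e-6, 1e-5, 5.5e-5, 1e-4):
    traction = bilinear_traction(opening, 2.0e6, 1e-5, 1e-4)
    print(f"opening {opening:.1e} m -> traction {traction:.3e} Pa")
print(f"fracture energy: {fracture_energy(2.0e6, 1e-4):.1f} J/m^2")
```

In a CZM such a law is applied along a predefined plane, whereas XFEM lets the solution determine where the crack goes.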

ExxonMobil then used Simulia, alongside results from its in-house experimental capabilities, to create 2D and 3D models of many different aspects of controlled hydraulic fracturing in rocks. This has helped ExxonMobil manage and mitigate the risks associated with drilling into the earth’s surface. It has also helped ExxonMobil develop recovery schemes to make production more economical. Bruce Dale, chief subsurface engineer for ExxonMobil, said: ‘In the decades ahead, the world will need to expand energy supplies in a way that is safe, secure, affordable and environmentally responsible. 3D simulation powers innovative solutions by building on the fundamentals to deliver energy in the 21st century.’

Visualising sites

As the ExxonMobil case study demonstrates, visualisation is an important tool in the geothermal sector as it allows engineers to understand, investigate and optimise inaccessible sites that are, potentially, tens of kilometres under the earth’s surface.

Geophysical exploration company Dewhurst Group specialises in site selection to pinpoint geothermal sources for electric power generation. The group conducts resistivity imaging surveys using broadband magnetotelluric (BMT) and low-frequency magnetotelluric (LMT) instrumentation to image the earth’s subsurface and understand the underlying tectonics that might drive a geothermal source.
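The depth that a magnetotelluric signal probes grows as its frequency falls, which helps to explain the value of pairing broadband with low-frequency instruments. A standard rule of thumb is the electromagnetic skin depth in a uniform half-space, roughly 503 × √(resistivity / frequency) metres. The sketch below evaluates that textbook approximation. It is not Dewhurst Group's workflow, and the resistivity and frequencies are hypothetical.

```python
import math

MU_0 = 4.0e-7 * math.pi  # magnetic permeability of free space, H/m


def skin_depth_m(resistivity_ohm_m, frequency_hz):
    """Skin depth in a uniform half-space: sqrt(rho / (pi * f * mu_0))."""
    if resistivity_ohm_m <= 0 or frequency_hz <= 0:
        raise ValueError("resistivity and frequency must be positive")
    return math.sqrt(resistivity_ohm_m / (math.pi * frequency_hz * MU_0))


# Hypothetical 100 ohm-m ground, sampled at three frequencies.
for frequency in (100.0, 1.0, 0.01):
    depth = skin_depth_m(100.0, frequency)
    print(f"{frequency:>6} Hz -> skin depth about {depth / 1000:.1f} km")
```

Real ground is layered and heterogeneous, which is why survey data are inverted into 1D, 2D and 3D resistivity models rather than read off from a single formula.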

The Dewhurst Group recently investigated a site at Jemez, New Mexico. During the first stages of the exploration project, the group gathered information on the survey location, which involved collecting data from 150 stations over an area of approximately 37 km².

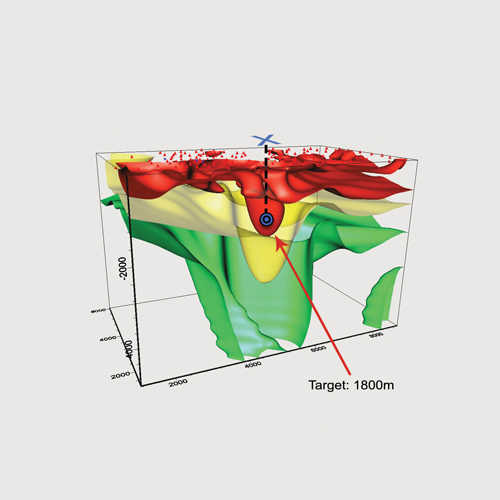

The group then analysed and modelled these results using the Golden Software Voxler application to generate 3D renderings of all the 1D, 2D and 3D inversion results. This collection of models was then integrated to generate a resistivity imaging model of the earth’s subsurface in the Pueblo region of Jemez, which is shown in Figure 1.

Figure 1: A 3D image of subsurface resistivity at the Pueblo of Jemez. Red shows areas of low resistivity, often associated with geothermally altered cap rock or a concentration of geothermal brine. Target area shown at a depth of 1800m and "X" marks the spot to drill.

These visualisation tools helped Dewhurst to locate an optimal drilling depth and target location. The resistivity results were later confirmed by subsequent exploration efforts, including a seismic survey. Blakelee Mills, CEO at Golden Software, said: ‘Whether it’s mapping the geothermal area, graphing the estimated geothermal production, or modelling the subsurface area of interest, our tools facilitate a more thorough understanding of the data at hand.’

Modelling and simulation provide a vital link to investigate the geothermal resources that exist under the earth’s surface, without research teams carrying out expensive and, potentially, dangerous experiments to find and extract these much-needed resources.

The next stage is to provide further links with the on-site teams, bringing these simulation and modelling processes to where they are needed so that engineers can make smart on-site decisions without having to go back to the research scientists.

For example, simulation apps can be built by modelling experts with Comsol Multiphysics and deployed with a local installation of Comsol Server, which can be accessed via a web browser. The engineers in the field can then connect and run apps to make decisions based on simulation results. ‘Integration of the numerical simulation with field work is very promising and still requires more user-friendly tools to achieve, which will result in more smart self-adaptive tools for detecting and extracting energy resources,’ Cai added.

Over the last few decades, the multiphysics nature of the simulation and modelling software has mirrored the multiphysics nature of geothermal detection and extraction to optimise these processes and keep the industry evolving.

In a similar vein, the software now needs to evolve and match the needs of the geothermal energy sector from an accessibility perspective. To allow the continued detection and extraction of resources from the world’s most inaccessible regions, simulation and modelling must be accessible to all users, regardless of their level of expertise or physical location.

In other words, the modernisation of geothermal simulation tools is the next step to optimise this sector and ensure its ongoing success to meet the world’s ever-growing energy demands.